Patroni and HAProxy Agent Checks

Using HAProxy agent checks to clean up your Patroni load balancing

Patroni is a wonderful piece of technology. In short, it lets an administrator configure a self-healing, self-managing replicated PostgreSQL cluster, and quite simply at that. With Patroni, gone are the days of managing your PostgreSQL replication manually and worrying about failover and failback during an outage or maintenance. Having a tool like this was paramount to supporting PostgreSQL in my own cluster, and after a lot of headaches with repmgr, finding Patroni was a dream come true. If you haven’t heard of it before, definitely check it out!

Once you have a working Patroni cluster, managing client access to it becomes the next major step. Probably the easiest method (and the one recommended in the Patroni docs) is to use HAProxy. With its integrated health checking and simple load balancing, an HAProxy-fronted Patroni cluster provides the maximum flexibility for the administrator while seamlessly handling failovers.

The problem - Do you like DOWN hosts?

However, the official HAProxy configuration template has a problem: in a read-write backend, you want your non-primary hosts to be inaccessible to clients, to prevent write attempts against a read-only replica. The template achieves this by having the health check fail on replicas, which results in the replica hosts being marked DOWN in HAProxy.

Now, some people might ask, “well, why is that a big deal?” And they may be right. However, as soon as you start monitoring your HAProxy backends with an external monitoring tool, you see the problem: “CRITICAL” alerts during normal operation! After all, a DOWN host is considered a problem in 99.9% of HAProxy use cases. But with Patroni it’s expected behaviour, which is not ideal.

So what can we do?

HAProxy’s agent-check directive

HAProxy, since at least version 1.5, supports a feature called agent-check. In short, this “enable[s] an auxiliary agent check which is run independently of a regular health check”. The agent check connects to a specific port on either the backend host or another target, and modifies the backend status based on the response, which must be one of the common HAProxy keywords (e.g. MAINT or READY).

So how does this help us? Well, if we had some way to obtain Patroni’s primary/replica status for each host, we could put the replica machines into MAINT mode instead of having them marked DOWN. This keeps monitoring clean while still letting us use the typical Patroni HAProxy configuration, with only minimal modifications to the HAProxy configuration and one additional daemon deployed on the Patroni hosts.
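
To make the protocol concrete, here is a minimal sketch of the client side of an agent check: connect to the agent port, read one line, and interpret it. The function name query_agent is hypothetical, and the default port 5555 simply matches the daemon described in this post. HAProxy's real implementation does more, but this is enough to poke at an agent by hand.

```python
import socket


def query_agent(host: str, port: int = 5555, timeout: float = 2.0) -> str:
    """Connect to an agent-check port and return the status line it sends."""
    with socket.create_connection((host, port), timeout=timeout) as conn:
        # The agent replies with a single newline-terminated line, e.g. b"MAINT\n".
        with conn.makefile("rb") as stream:
            line = stream.readline()
    return line.decode("ascii").strip()
```

Calling query_agent('mast-pg1') against a host running the daemon below should return either READY or MAINT, depending on that host's current role.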

The Code - Python 3 daemon

The following piece of code is a Python 3 daemon I wrote that uses the socket and requests libraries (the latter requires the python3-requests package on Debian, or requests via pip3) to:

- Listen for the agent check on port 5555.
- In response to a request, query Patroni’s local API to determine that host’s role.
- Return MAINT or READY to HAProxy based on the role.

Here is the code - I’m sure it can be improved significantly but it works for me!

    #!/usr/bin/env python3

    # Simple agent check for HAProxy to determine Patroni primary/replica status

    import socket
    import sys

    import requests

    # Make sure we clean up when we fail
    def cleanup(e):
        print(e)
        conn.close()
        sock.close()
        sys.exit(1)

    # External port to listen on and report status to HAProxy
    listen_port = 5555

    # Location of the Patroni API
    data_target = 'http://localhost:8008'

    # Get the current role from Patroni's API; return 'null' if it cannot be determined
    def getstate():
        try:
            # Use a timeout so a hung API cannot block the accept loop
            r = requests.get(data_target, timeout=2)
            return r.json()['role']
        except (requests.RequestException, ValueError, KeyError):
            return 'null'

    # Set up a listen socket on listen_port
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.bind(('', listen_port))
    sock.listen(1)

    # Loop waiting for client requests in blocking mode
    while True:
        conn, addr = sock.accept()
        state = getstate()

        # Set our response based on the state; only `primary` should be READY in read-write mode
        if state == 'primary':
            data = b'READY\n'
        else:
            data = b'MAINT\n'

        # Send the data to the client
        try:
            conn.sendall(data)
            conn.close()
        except Exception as e:
            cleanup(e)
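
The READY/MAINT decision in the loop above can also be pulled out into a small function, which makes it easier to test and extend. The sketch below is a hypothetical variant rather than part of the daemon itself: the names WRITABLE_ROLES and agent_response are made up for illustration, and it assumes that older Patroni releases report the role as master where newer ones report primary.

```python
# Roles that should receive traffic on a read-write backend.
# Assumption: older Patroni releases report 'master', newer ones 'primary'.
WRITABLE_ROLES = {"primary", "master"}


def agent_response(role: str) -> bytes:
    """Map a Patroni role string to an HAProxy agent-check reply."""
    if role in WRITABLE_ROLES:
        return b"READY\n"
    # Replicas, unknown roles, and the 'null' fallback all go to MAINT.
    return b"MAINT\n"
```

With this in place, the body of the loop reduces to sending agent_response(getstate()) to the client.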

Running the daemon with systemd

Running the above Python code is really simple with systemd. I use the following unit file, assuming the above code is located at /usr/local/bin/patroni-check.

    # Patroni agent check systemd unit file

    [Unit]
    Description=HAProxy agent check for Patroni status
    After=syslog.target network.target patroni.service

    [Service]
    Type=simple
    User=postgres
    Group=postgres
    StartLimitInterval=15
    ExecStart=/usr/local/bin/patroni-check
    KillMode=process
    TimeoutSec=30
    Restart=on-failure

    [Install]
    WantedBy=multi-user.target

This is a really straightforward unit with one deviation: StartLimitInterval=15 is used to prevent the daemon from restarting immediately on failure. In my experience (probably a n00b error), Python doesn’t properly clean up the socket immediately, leading to the daemon blowing through its ~5 restart attempts in under a second and failing every time with an “Address already in use” error. This interval gives some breathing room for the socket to free up. (Strictly speaking, StartLimitInterval= sets the window over which start attempts are counted, while RestartSec= sets the delay between restarts, so adding RestartSec= may be the more direct fix.) And luckily, HAProxy won’t change the state if the agent check becomes unreachable, so this should be safe.
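
A likely cause of the “Address already in use” error is the port lingering in TIME_WAIT after the previous process exits. The usual remedy is to set SO_REUSEADDR on the listening socket before binding. The sketch below shows how the socket setup could look under that assumption; the helper name make_listener is hypothetical and not part of the daemon above.

```python
import socket


def make_listener(port: int, host: str = "") -> socket.socket:
    """Create a listening TCP socket that can rebind right after a restart."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # Allow bind() to succeed while old connections on this port are in TIME_WAIT.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind((host, port))
    sock.listen(1)
    return sock
```

In the daemon, the three socket setup lines would become sock = make_listener(listen_port).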

Enable it in HAProxy

Now finally, configure your HAProxy backend to use the agent check. Here’s my (live) config for a read-write backend:

    backend mast-pgX_psql_readwrite
        mode tcp
        option tcpka
        option httpchk OPTIONS /primary
        http-check expect status 200
        server mast-pg1 mast-pg1:5432 resolvers nsX resolve-prefer ipv4 maxconn 100 check agent-check agent-port 5555 inter 1s fall 2 rise 2 on-marked-down shutdown-sessions port 8008
        server mast-pg2 mast-pg2:5432 resolvers nsX resolve-prefer ipv4 maxconn 100 check agent-check agent-port 5555 inter 1s fall 2 rise 2 on-marked-down shutdown-sessions port 8008
        server mast-pg3 mast-pg3:5432 resolvers nsX resolve-prefer ipv4 maxconn 100 check agent-check agent-port 5555 inter 1s fall 2 rise 2 on-marked-down shutdown-sessions port 8008
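
Note that the regular health check is still there alongside the agent check: HAProxy sends OPTIONS /primary to port 8008 and expects a 200. When debugging, it can help to reproduce that check by hand. The sketch below does so with Python's standard library; the function name is_primary is hypothetical, and the default port is the one from the config above.

```python
import http.client


def is_primary(host: str, port: int = 8008, timeout: float = 2.0) -> bool:
    """Return True if OPTIONS /primary on the Patroni API answers with 200."""
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    try:
        conn.request("OPTIONS", "/primary")
        return conn.getresponse().status == 200
    except (OSError, http.client.HTTPException):
        # API unreachable or malformed reply: treat as a failed check.
        return False
    finally:
        conn.close()
```

On a healthy cluster, exactly one of the three hosts should return True at any given time.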

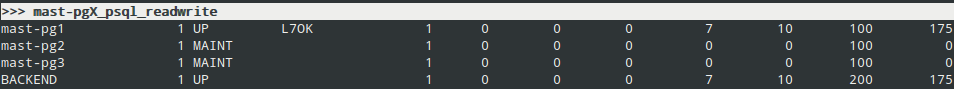

And here it is in action:

Conclusion

I hope that this provides some help to those who want to use Patroni fronted by HAProxy but don’t want DOWN backends all the time! And of course, I’m open to suggestions for improvement or questions - just send me an email!

UPDATE 2024-12-01: Updated various instances of master to primary to reflect changes in Patroni since this post was originally written. Thanks to [Gary T. Giesen](https://fosstodon.org/@ggiesen) for pointing these out!